Protecting Metrics. Ignoring Safety.

Why is Snapchat on the 2026 Dirty Dozen List?

Snapchat is a tool of choice for sextortionists, sex traffickers, and child abusers — and the company knows it. Internal documents and whistleblower accounts reveal that reports of abuse have often gone ignored, critical safety fixes were dismissed to protect engagement metrics, and features like My AI have promoted statutory rape. The technology exists to stop this, yet Snap chooses to look the other way.

The Problem

Snapchat remains a prime tool for sextortionists, sex traffickers, and child abusers to commit their crimes. And it’s no surprise.

Internal documents and whistleblower accounts suggest that Snapchat has consistently deprioritized user safety in favor of growth and engagement metrics.

- A June 2025 Thorn report found the top platform identified by sextortion victims was Snapchat (37%).

- According to documents from a lawsuit by the state of New Mexico, Snapchat’s internal data shows the company was receiving around 10,000 user reports of sextortion per month. Yet many reports apparently went unresolved or uninvestigated.

- A former Snapchat employee said Snapchat doesn’t take safety seriously, commenting “I left because of that. It was an ethical reason why I left…[Snapchat] started out as a sexting app, and they want to keep that. That kind of stuff keeps all these young kids on the platform.”

- Snapchat’s “My AI” chatbot was exposed for inadequate safety testing: internal engineering managers warned that the AI “can be tricked into saying just about anything,” and after launch it was caught dispensing dangerous advice to minors, such as how to conceal alcohol and drugs or how to pursue sexual encounters with adults.

- According to the NM lawsuit, safety enhancements flagged as critical by internal teams have often been dismissed by upper management due to concerns over “disproportionate admin costs” or potential impacts on user retention.

- In some documented cases, even accounts with dozens of reports (such as “75 complaints mentioning nudes, minors, and extortion”) remained active for long periods.

Snapchat’s intentional features and design choices have made it a hub for exploiters. The platform’s disappearing messages feature, marketed as a privacy tool, has been weaponized by predators to coerce minors into sending explicit content, which is then saved or screenshotted for blackmail. Snap’s own research reportedly confirmed:

“One-third of teen girls and 30% of teen boys reported being exposed to unwanted contact on Snapchat in 2022. Internal surveys from Snap revealed that over half of Gen Z users or their friends had experienced catfishing, and many were victims of sextortion. Rather than confront and address these alarming realities, Snap chose to ignore user reports. Alarmingly, one internal investigation concluded that 70% of victims had not reported their abuse because they knew no action would be taken by Snap; indeed, of the 30% that did report, none were addressed.”

Progress Remains Slow and Ineffective

While Snapchat has introduced some safety measures, such as warnings for messages from strangers and restrictions on friend requests, these solutions fall far short of addressing the platform’s systemic issues. Many of these features rely on users recognizing risks and taking action, which is unrealistic for minors who may not fully understand the dangers or who feel pressured not to report abuse. Age verification remains weak, allowing underage users to access the platform and predators to pose as young peers. Parental controls are limited and optional, leaving many families without the tools needed to protect their children, and leaving children who lack involved, tech-savvy guardians completely on their own. Even reporting tools, though improved, depend on victims overcoming shame and fear to report abuse. That is an unlikely scenario for many, and one that occurs only after a degree of harm has already happened, which is why Snapchat should be doing more to prevent the harm from taking place to begin with.

“Snap knowingly incorporates design features that facilitate and enable the distribution of drugs and the sexual exploitation of children, including the distribution of CSAM, child sex trafficking, child pornography, and child predatory activities. These features include ephemeral content, personalization algorithms, Snap Map, and My AI.”

— complaint filed by the Utah Department of Commerce / Utah Office of the Attorney General against Snapchat / Snap, Inc.

It’s Time to Be Honest

The systemic and historic problems at Snapchat are no accident. The unsafe nature of this platform stems from Snapchat’s business model and design philosophy. Make no mistake: this is a platform that, in its very architecture, appears to intentionally allow the creation and sharing of sexually explicit material, including of minors. It appears that if Snap truly prioritized child safety, it would immediately deploy existing technologies to block explicit content creation and sharing, cutting off the tools predators rely on. Yet it hasn’t. Every day, sextortion, child sexual abuse material, and images used to blackmail and traffic children continue to proliferate, a direct result of deliberate design choices.

The tech to stop this exists. Snap’s failure to act is a conscious and knowing decision that leaves children exposed to grave harm.

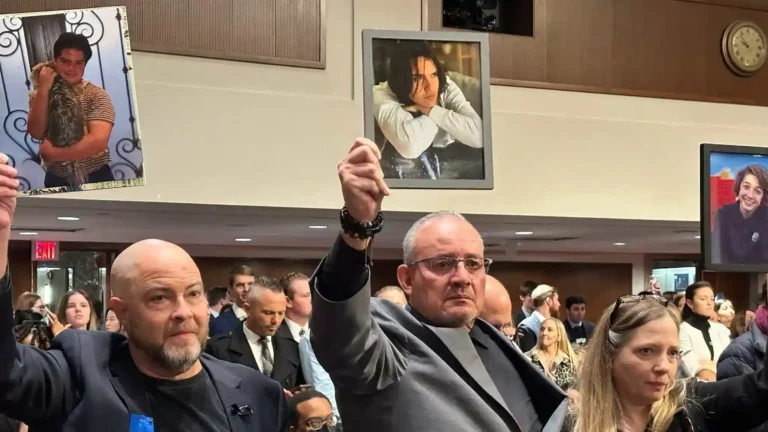

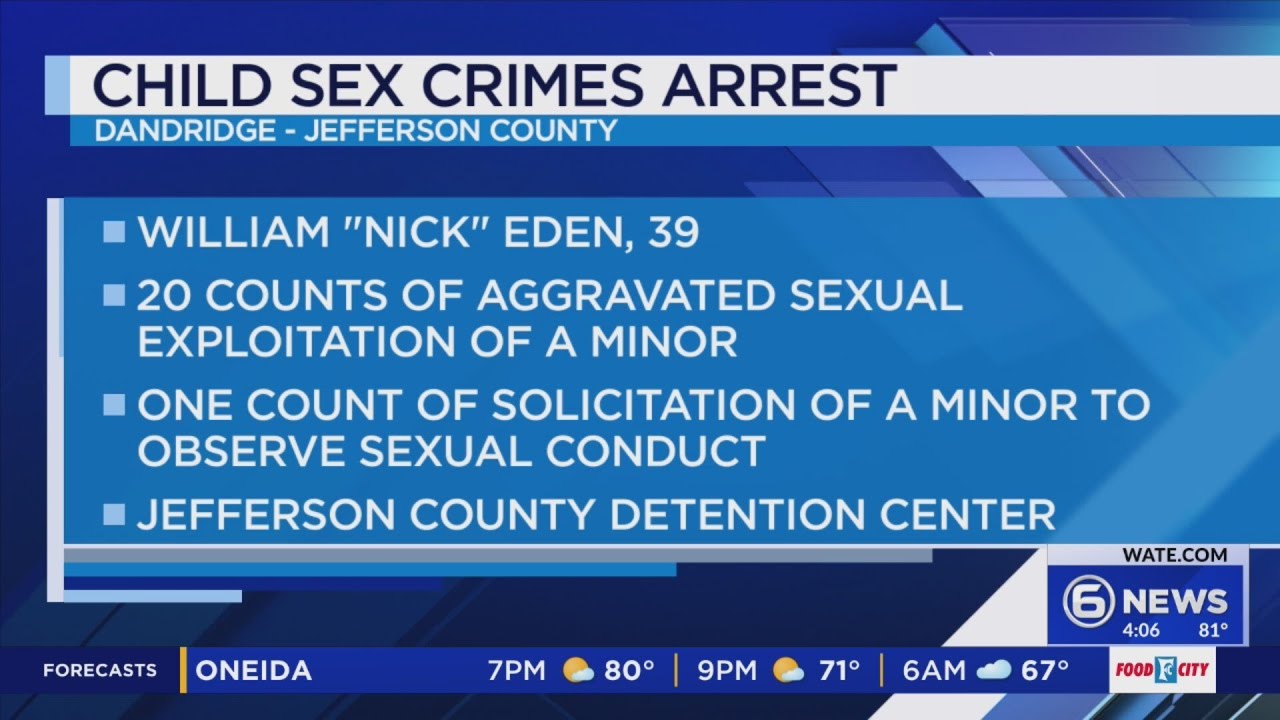

Proof: Evidence of Exploitation

WARNING: Any pornographic images have been blurred, but are still suggestive. There may also be graphic text descriptions shown in these sections. POSSIBLE TRIGGER.

Sextortion on Snapchat

It’s important to note that Snapchat’s feature allowing the ephemeral creation/distribution of pornography is a welcome mat for sextortionists.

Below is a non-exhaustive sampling of cases documented in 2025. These and other sexual exploitation issues on Snapchat are historic problems essentially tracing back to the origin of the app, due to both its design and its chronic inability or unwillingness to prevent and moderate risks for exploitation.

- Lacrosse Coach, 20, Allegedly Used Snapchat to ‘Sextort’ at Least 30 Boys, Including a 13-Year-Old Victim—People

- A former New Jersey high school lacrosse coach allegedly posed as a teenage girl on Snapchat to sextort at least 30 boys, some as young as 13, coercing them into sending nude photos and videos, then threatening to expose the images unless they paid or sent more explicit content. Victims were left terrified, depressed, and suicidal; one 13-year-old attempted suicide amid escalating demands and threats to ruin his life. Snapchat was the central tool: fake female accounts initiated contact, received images, delivered threats through disappearing messages, and collected payments via the app’s Cash App feature, exploiting its perceived privacy to torment dozens of boys across states.

- Wayne County Man Accused of Paying 13-Year-Old Girl for Nude Photos on Snapchat—Click One Detroit

- In this case, a Michigan adult allegedly used Snapchat to pay a 13-year-old girl for nude images, even after she made her age clear. “The investigation began after a 13-year-old girl from Arizona, along with her parents, reported to police in February 2025 that two different people were paying her to send nude images of herself through social media apps… When investigators reviewed [the man’s] phone, they saw hundreds of images and videos from his ‘hidden’ folder that court documents claim ‘appeared to have been deleted when law enforcement began knocking on the door.’ The folder contained sexually explicit images of [additional] young girls, with some downloaded from Snapchat.”

- British teenage boys being targeted by Nigerian ‘sextortion’ gangs, using Snapchat & Instagram—ITV

- Transnational criminal gangs, often claiming to be young women, used Snapchat and other platforms to trick teenage boys (some as young as 14) into sending explicit images. They then demanded money (about £100) under threat of exposure to friends, family, or school. The surge in cases prompted a formal warning from the National Crime Agency and a national awareness campaign, showing how Snapchat’s social-media reach and messaging ease can be weaponized for sextortion.

- UK police launch campaign after more than 110 child sextortion attempts reported monthly—The Guardian

- The National Crime Agency (NCA) announced that police forces in the UK were receiving over 110 reports of child sextortion attempts per month in 2024 — many involving Snapchat or similar platforms. The prominence of Snapchat in these campaigns illustrates how the platform’s design and popularity among youth make it a frequent destination for exploitation.

- Sextortion & grooming threats toward an 8-year-old via Snapchat—Sky News

- A predator reportedly created fake accounts posing as pupils at a primary school and used Snapchat to target an eight-year-old girl. The predator allegedly demanded sexual videos and pictures, threatening to hack phones and post images online. This case — involving a very young child — underscores the extreme vulnerability of minors and how Snapchat (with fake-account capability and private messaging) can be abused for sextortion and grooming even of elementary school–age children.

- Snapchat sextortion of N.J. child and adult traced to Texas man, investigators say – NJ

- A Texas man was arrested for sextorting a child and an adult in Bergen County, New Jersey, over several months, inflicting severe psychological trauma. The scheme involved using fake accounts—impersonating another person—to initiate contact, coerce the victims into recording and sending explicit photos and videos of sexual acts, and then weaponize those materials with blackmail demands to force compliance and further degradation. Authorities traced the activity to his Dallas home, where he faces charges including first-degree production of child pornography, aggravated sexual extortion, and impersonation, and is detained pending extradition to New Jersey.

- Nurse in Marrakech detained for alleged sextortion using Snapchat—Yabiladi

- A nurse at a private clinic in Marrakech’s Oudaya prison was detained and remanded in custody on charges of inciting corruption, soliciting prostitution, and producing/distributing pornographic materials, after allegedly using Snapchat to lure at least one victim—though the investigation suggests more—into sharing custom explicit videos tailored to her interests. The nurse then extorted payments like 1,500 dirhams for fabricated “beautification and travel expenses,” before blocking her victims and vanishing. This behavior inflicted profound emotional distress, betrayal, and vulnerability to further digital fraud on the deceived individual.

A report from Thorn and NCMEC released in June 2024, Trends in Financial Sextortion: An Investigation of Sextortion Reports in NCMEC CyberTipline Data, analyzed more than 15 million sextortion reports to NCMEC between 2020 and 2023. The report found:

- Snapchat (16.7%) is the #2 platform where perpetrators actually distributed sextortion images out of reports that confirmed dissemination of the images on specific platforms, following Instagram (81.3%).

- Snapchat (31.6%) is the #2 platform where perpetrators initially contacted victims for sextortion, following Instagram (45.1%).

- Snapchat (35.8%) is the #1 secondary platform mentioned when sextortion victims were moved from one platform to another.

- Despite Snapchat being mentioned nearly as often as Instagram and far more than Facebook in public reports of sextortion, Snapchat’s direct report volume to NCMEC is almost half that of Facebook and a quarter as many as Instagram, though their report volume improved in the latest (August 2023) data sample.

**Previous years of proof available upon request

About Sextortion: A Serious Danger for Children and Adults

Sextortion is the use of sexual images to blackmail the person depicted in those images. It often encompasses a financial element as well, where predators demand money, threatening to publish explicit images of children if they do not comply.

Teenage boys account for 90% of financial sextortion cases involving minors.

On average, there were 812 reports of sextortion per week to the National Center for Missing and Exploited Children (NCMEC) in the last year of data analyzed, with more than two-thirds of these reports appearing to be financial sextortion, according to a joint NCMEC and Thorn report.

In 2024, 1 in 4 young people (24%) reported having personally experienced sexual extortion as a minor, including 1 in 5 (20%) who were teens at the time of the survey and 1 in 3 (31%) who were young adults at the time of the survey, according to an online survey of 1,200 young people ages 13-20 in the U.S.

One of the most severe consequences of sextortion is suicide: at least 36 sextortion cases have resulted in suicide since 2021. The speed at which sextortion can escalate (sometimes only hours pass between first contact and suicide) shows why we need mass-scale prevention and safety by design. Online platforms must do more to prevent strangers from contacting kids, because there is often no time to wait for a platform to respond to a report before significant harm occurs. We need platforms to take preventative action, not just reactive action.

We must also recognize that adults are victims of sextortion. A survey across 10 countries found that 1 in 7 adults have been threatened with the distribution of sexual images depicting themselves. Adult victims are often underreported, silenced by shame and fear of social consequences, yet they are particularly targeted for their financial resources. Further, when these individuals hold sensitive government positions, sextortion escalates into a national security threat.

Sex Trafficking and Child Sexual Abuse Material (CSAM) on Snapchat

Snapchat remains a premier destination for sex traffickers and predators to create/produce and distribute child sexual abuse material (CSAM), and also to groom and extort people into sex trafficking.

It’s important to note the intrinsic connection between Snapchat’s features allowing the ephemeral creation/distribution of pornography and the ability for predators to coerce children to generate CSAM, which is then often used as blackmail to further coerce or manipulate them into either continued CSAM production, sex trafficking, or other abuses.

Below is a non-exhaustive sample of cases documented in 2025. These and other sexual exploitation issues on Snapchat are historic problems essentially tracing back to the origin of the app, due to both its design and its chronic inability or unwillingness to robustly prevent and moderate risks for exploitation.

**Previous years of proof available upon request

- “Missing Louisiana girl, 13, rescued from box in Pennsylvania” — The Guardian

- Police said the suspect met the 13-year-old through Snapchat and then transported and imprisoned her. Authorities charged the suspect with human trafficking and sexual assault; Snapchat was the initial contact platform used to recruit the victim.

“This child … was groomed, exploited and then sexually abused by strangers who found her online,” Louisiana’s attorney general, Liz Murrill, said. “This is just one example of the dangers of social media and of human trafficking.”

- “Three Men Arrested for Sex Trafficking Minor Girl in Bucks County” — CSE Institute

- Law enforcement reports that three men used Snapchat to communicate with and recruit the 13-year-old girl and to coordinate meetings; charges included sex trafficking, unlawful contact, and dissemination of CSAM.

“[One man] posed as a 17-year-old [on Snapchat] and solicited multiple sexually explicit photos and videos from [the victim.] Over time, [the man] allegedly coerced the girl into creating a Grindr profile, instructing her to list a false age of 18… After the profile was created, reports indicate that her alleged trafficker arranged meetings with three men in Pennsylvania… An additional sexual assault was allegedly recorded during a Snapchat video call…”

- Hays County man accused of trafficking minors via Snapchat – Houston Chronicle

- Police say the suspect used a Snapchat account to solicit nude images and request sex from minors, offering alcohol, nicotine, and drugs in exchange. Snapchat was used as the primary communication and recruitment platform for the trafficking crimes.

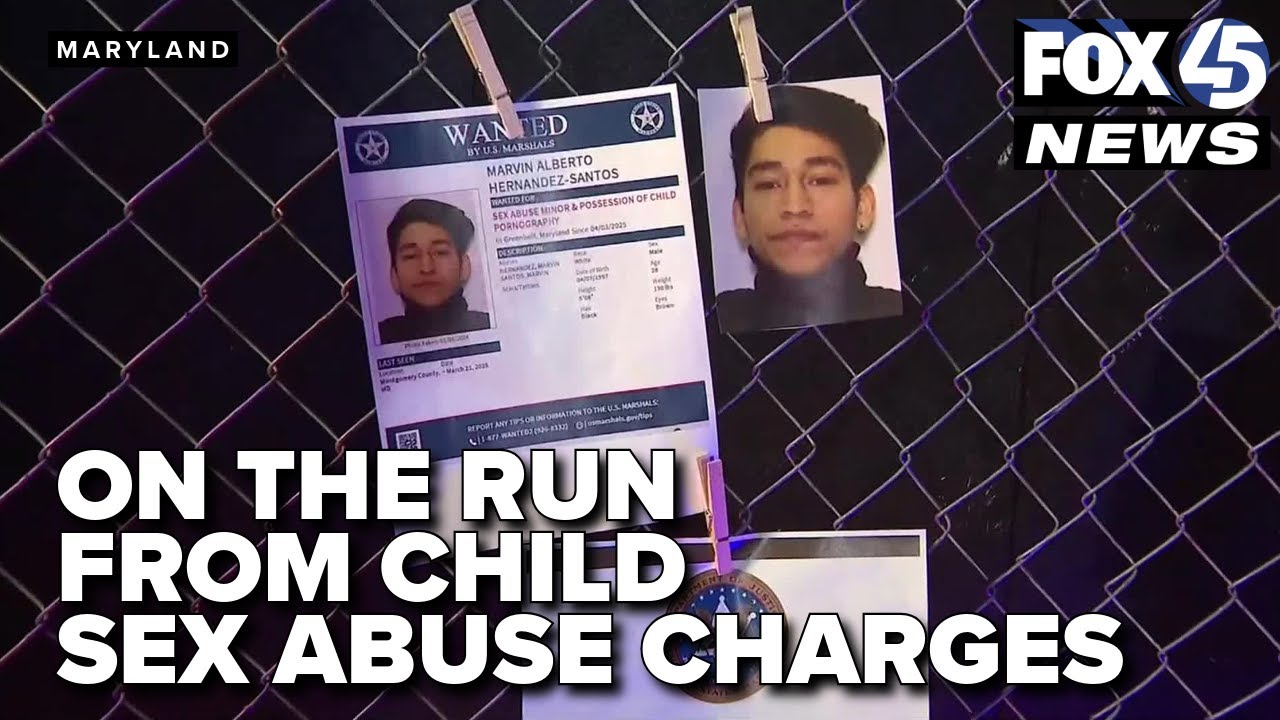

- Maryland Man Sentenced to 14 Years in Prison for Sexually Exploiting a Minor on Snapchat – U.S. Department of Justice

- A Rockville, MD man coerced a 15-year-old via Snapchat to send sexually explicit images and later met the victim for in-person sexual abuse. Snapchat was used to initiate grooming, send explicit images, and coordinate meetings.

- Vernon Man Who Enticed Minors to Send Him Explicit Images on Snapchat Sentenced to 7 Years — U.S. Department of Justice

- A Connecticut man used Snapchat to solicit explicit photos and videos from minor girls, sometimes offering money through Cash App. He also sent explicit images of himself to minors. Convicted of receiving child sexual abuse material.

- Ohio Man Sentenced to 30 Years for Luring Minor Girls into Sending Explicit Content on Snapchat – U.S. Department of Justice

- From 2022–2024, the defendant used Snapchat while pretending to run a “modeling agency.” He targeted hundreds of girls ages 12–15 nationwide, convincing them to send sexually explicit content in exchange for money or “modeling opportunities.” Investigators identified CSAM from more than 90 minors.

- “Bay City man, 21, charged in sex trafficking, child porn” — Midland Daily News

- A 21-year-old man was federally indicted on 11 counts, including sex trafficking and child pornography production involving five minors. Snapchat was used to extort a victim by sending a sexual photo and threatening to ruin their reputation unless they complied. While the indictment notes broader online distribution of child pornography, Snapchat is explicitly cited only for this extortion incident. This underscores how Snapchat facilitates anonymous, rapid threats in sex trafficking and CSAM schemes.

- Lorton man sentenced to 18 years in prison for sexually exploiting children on Snapchat – Department of Justice

- The defendant used Snapchat between at least May 1, 2021 and May 8, 2024 to find minor girls (some as young as 9), entice them to send sexually explicit images/videos, and collect more than 1,000 CSAM files across multiple devices. Investigators found he used different Snapchat accounts — including a catfishing approach — to trick minors.

- Former Army Private Sentenced to 22 Years in Prison for Child Sexual Exploitation Crimes Involving Girls He Met on Snapchat – Department of Justice

- The defendant was convicted after a jury trial for production of child pornography, receipt and possession of child pornography. Prosecutors said he used Snapchat to receive CSAM of a 14-year-old (initial contact when she was 13), and to receive explicit material from a 15-year-old.

- West Los Angeles Man Sentenced to 40 Years in Prison for Using Snapchat to Entice Children into Producing Sexually Explicit Videos – Department of Justice

- The defendant used Snapchat to meet children, entice them to engage in sexually explicit video-chats, which he secretly screen-captured and saved. He pled guilty (in 2024) to production of child pornography and enticement of a minor to engage in criminal sexual activity.

“After his victims sent him sexually explicit content, [the man] would sometimes demand additional sexually explicit images and videos from them. [The man] also threatened to publish or otherwise expose the prior pictures and videos sent by the victim or created by defendant if the victim did not comply with his demands. For example, in February and March of 2020, [the man] enticed a victim, who was approximately 9-10 years old at the time, to produce sexually explicit images and videos used on the social media app Snapchat. [The man] admitted in his plea agreement to knowingly causing at least four additional victims – ranging in age from 12 to 16 years old – to produce multiple files of child pornography.”

New Mexico and Nevada Sue Snapchat for Enabling Exploitation, Deceptive or Ineffective Safety Tools, and More

New Mexico AG Raúl Torrez: Lawsuit Alleging Snapchat Enables Sextortion and Child Sexual Exploitation — New Mexico Office of the Attorney General

Date: Sep 5, 2024 (press release / complaint filings).

The New Mexico AG’s complaint alleges Snapchat’s design and policies facilitated sextortion and sexual exploitation, claiming that minors report more sexual interactions on Snapchat, and that more trafficking victims are recruited there, than on other platforms.

The alleged harms laid out in the case include:

- “Implementing design features and policy choices that fail to ascertain or apply the actual age of users

- Preventing effective parental controls and reporting mechanisms

- Permitting predators to identify, contact, groom, and extort children and to develop CSAM through these contacts

- Designing algorithms and features that connect child sex predators to children and allow predators to find target victims

- Creating a virtual market for marketing and selling illegal drugs and guns to children

- Failing to warn and affirmatively misleading parents and children about the presence of sex trafficking, sexual exploitation content, and drug and gun sales on the platform

- Failing to report CSAM

- Using features like ephemeral content, such as “streaks,” that reward compulsive use of Snapchat

- Aggressively sending notifications of new content to users”

It was also reported that the AG’s office set up a decoy account and swiftly encountered sexually exploitative messages and contacts:

“The suit maps out the many ways that Snapchat has allegedly become a “breeding ground” for child predators, as demonstrated by an undercover investigation conducted by New Mexico’s Department of Justice. The office first set up a decoy account for a 14-year-old girl with the username Sexy14Heather (“Heather”), initially listing her sign-up age as 18, but later modifying it to a minor’s account. Within a day of merely searching for other 15-year-olds on the app, and without adding any other users, Heather received a friend request from Enzo (Nud15Ans), who swiftly requested they exchange anonymous messages off Snapchat through a ngl.link. After this single exchange, and despite her account being private, Snapchat suggested nearly one hundred other users to Heather, including other adults who sought to exchange sexually explicit content. An additional search that suggested Heather was looking for other users under 18 led to continued recommendations for explicit accounts with usernames like “naughtypics,” “gayhorny13yox,” and “teentradevirgin.” And despite never directly engaging with them, the decoy account received push notifications that referenced even more explicit content.”

Nevada AG Aaron D. Ford: Lawsuit Targeting Snapchat’s Design and Corporate Practices as Hazardous to Children

Nevada Attorney General Aaron Ford filed lawsuits in state court on January 30, 2024, against Snapchat and other social media giants, accusing them of deceptive trade practices and negligence for designing addictive platforms that exploit children’s developing brains for profit. Snapchat, in particular, is branded an “addiction machine” akin to an illegal drug, engineered to demand constant engagement (even during driving or meals) through manipulative features that maximize youth use and emotional hooks, fostering severe harms like sexual exploitation, auto accidents, drug overdoses, suicides, and eating disorders.

The complaint highlights how Snapchat’s ephemeral messaging and camera-first interface lure teens into constant interaction, enabling risks such as sextortion via disappearing threats and private sharing of explicit content that evades easy detection, all while prioritizing revenue over safety.

Key points of the complaint:

- Snapchat (and other platforms) were allegedly designed to exploit children’s psychological vulnerabilities and encourage addictive use.

- Disappearing content, endless browsing, push notifications, streaks, and other features are framed as manipulative.

- The platforms allegedly engaged in deceptive marketing, claiming teen safety while exposing minors to sexual exploitation risks.

- The lawsuit is framed as a public‑health intervention, highlighting systemic exposure to harms including sexual exploitation.

Utah Sues Snapchat for its “My AI” Bot Promoting Statutory Rape, and Other Features Facilitating Sextortion and Exploitation

Utah AG Derek Brown: Lawsuit Alleging Snapchat’s AI and Platform Features Facilitate Sextortion and Exploitation of Minors – Statement

The Utah AG asserted that:

“Snapchat is designed to steal time and attention away from teens at the expense of their development, health, and welfare. This complaint brings three separate counts, alleging that:

- Snap designed addictive and dangerous features into its platform to exploit children’s psychological vulnerabilities for financial gain, constituting an unconscionable business practice under state law.

- Snap publicly positioned itself as a safe alternative to traditional social media while deceiving users and their parents about the platform’s safety and the resources Snap committed to protecting them.

- Snap is violating the Utah Consumer Privacy Act by not informing consumers about its data collection and processing practices and failing to provide users or their parents with an opportunity to opt out of sharing sensitive data, such as biometric and geolocation information.”

Examples from the Complaint include:

- “Snap publicly advertises “extra protections” for teens and an “age-appropriate content experience,” but DCP’s investigation found the experience in a 13-year-old and 15-year-old test account to be filled with highly sexual material, including recommending videos from OnlyFans models and other models in stages of undress. (lines 223-229)

- Snapchat has become a virtual market for drug cartels, with Utah officials arresting a drug dealer running a “truly massive” drug ring through Snapchat in 2019. This ring openly advertised narcotics and arranged deals on the platform, linking black market THC cartridges to “almost every high school in the Salt Lake valley”. (line 211)

- The complaint identifies several gambling-like design features that exploit children’s psychological vulnerabilities, including ephemeral messages, Snapstreaks, push notifications, beauty filters, personalization algorithms, and Snap Map. (line 5)

- Snap’s introduction of “My AI,” a virtual chatbot, is criticized for lacking proper testing and safety protocols, and for giving misleading or harmful advice to underage users, including how to hide alcohol and drugs or set the mood for a sexual experience with an adult [i.e. statutory rape]. (lines 8-9)

- Snap also uses dark patterns to extract information from children using My AI. Dark patterns are design features that encourage users to use the feature. My AI is prominently placed at the top of the user’s chat, even before the user’s real, human friends, and is automatically enabled for all Snapchat users. My AI cannot be removed from the app. (line 145)

- My AI collects user geolocation data even when “Ghost Mode” is activated, and Snap fails to clearly disclose this or the involvement of OpenAI in data processing. (lines 151-152, 154, 156, 160)”

It also asserts in the public complaint:

- “Snap’s lax age-gate policy protects no user against predators who pose as minors to groom and extort children. As the company knows, predators nearly always pose as young children to gain the trust of children and teens. By refusing to verify ages, Snap knowingly enables predators to impersonate children and sexually exploit victims.”

- “Snap does not consistently terminate accounts when its systems discover a user under 13.”

- “Snap’s claims about using strong detection tools are inaccurate. The company has consistently underinvested in enforcement and detection, resulting in significant backlogs and massive amounts of harmful videos being recommended to users…”

- “The Utah Division of Consumer Protection investigated Snap’s claims about providing an “age-appropriate” viewing experience for teens. While using test accounts with reported ages set to 13 and 15, the investigation found that the experience for Utah users in these age groups is highly sexual and not age-appropriate—contradicting Snap’s representations. Videos recommended to the accounts included a woman in a sexually suggestive pose with text stating “when he’s tired after the 2 first rounds”; videos made by Only Fans models with sexual innuendo like “need a big boy?”; a video of a man undressing in a car; and a photo of an apple bong with marijuana.”

- “The in-app reporting function is neither easy to use nor effective.”

Requests for Improvement

NCOSE holds that Snapchat is too dangerous for children and believes the minimum age for users should be at least 16, if not older. In addition to this, we recommend Snap make the following changes immediately.

Automatically detect and block sexually explicit images

Automatically detect and block sexually explicit images, especially when sent to or from minor accounts. Provide clear warnings, resources, and prompts to report, block, or remove offending accounts. Notify minors attempting to send nude images about the risks and prevent the image from being sent. Bumble, Apple, Google, and Discord use some form of technology to proactively blur sexually explicit images before they’re viewed. The technology exists; this is possible.

Proactively identify, remove, and block accounts and bots that are posting and promoting pornographic content

Proactively and more efficiently identify, remove, and block accounts and bots that are posting and promoting pornographic content (whether publicly or in direct messages) and/or selling sex.

Fix age verification loopholes

Fix age verification loopholes. Users can lie about their age during signup without robust checks, allowing underage kids to access adult-oriented features or adults to pose as teens, facilitating initial contact and grooming.

Halt minors’ access to friend-finding mechanics beyond existing phone contacts

Halt minors’ access to friend-finding mechanics beyond existing phone contacts. Current contact suggestions based on mutual friends or on proximity can expose minors to adult strangers.

Improve moderation and reporting effectiveness and consistency

Improve moderation and reporting effectiveness and consistency. Reports of inappropriate content or behavior are often not acted upon promptly, allowing extreme or violent material to reach minors and predators to continue operating.

Expand Family Center functions

Expand Family Center functions to allow parents to see what their children are exposed to on Stories and other areas of the app; send alerts to parents when their children add or remove friends, when settings are changed, or when someone attempts to send or receive sexually explicit images.

Fast Facts

Apple App Store: 13+; Google Play: T (Teen); Bark: 16+; Common Sense Media: 16+; Snapchat Policy: 13+

A 2025 Pew Research survey found 55% of U.S. teens have used Snapchat and 46% use it daily.

Snapchat (37%) was identified as the #1 platform used to communicate threats among young people who had experienced sexual extortion as a minor.

A 2025 survey in the UK found Snapchat (29%) was the #3 platform where children were exposed to pornography. Among those who had seen AI-generated pornography, 13% reported seeing it on Snapchat.

Resources

Report suspected child sexual exploitation to the National Center for Missing and Exploited Children (NCMEC) CyberTipline

NCMEC’s Take It Down service: Resource for minors to remove their sexually explicit content from online platforms

App Danger Project: Snapchat

Stop Non-Consensual Intimate Image Abuse (StopNCII) – Resource for adults to remove image-based sexual abuse from online platforms

Protect Young Eyes: Snapchat App and Parental Control Review

Recommended Reading

Sky News:

Survivor of online child abuse shares story as 'deeply shocking' rise in crimes revealed

CBS News:

Chicago area man sentenced to 37 years in prison for sexually exploiting nearly 100 girls

The Hour:

CT resident who lured child for sex act has history of using Snapchat to find victims, warrant says

Alaska Beacon:

Alaska lawmaker’s chief of staff arrested on sex trafficking and child exploitation charges

Share!

Help educate others and demand change by sharing this on social media or via email!
